tl;dr: Python can turn tedious work into free time!

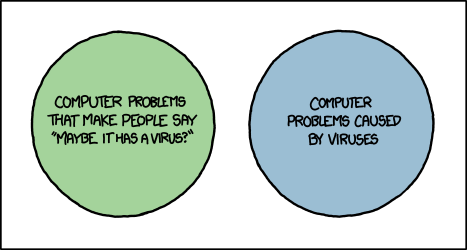

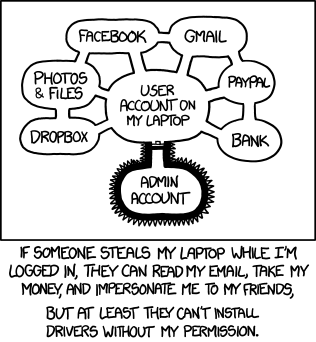

PHP websites were on the rise a few years ago, mainly thanks to easy CMSs like Drupal and Joomla. Their main problem is that they carry a much higher maintenance cost than a static website: you have to keep them up to date, and there are new exploits every other week.

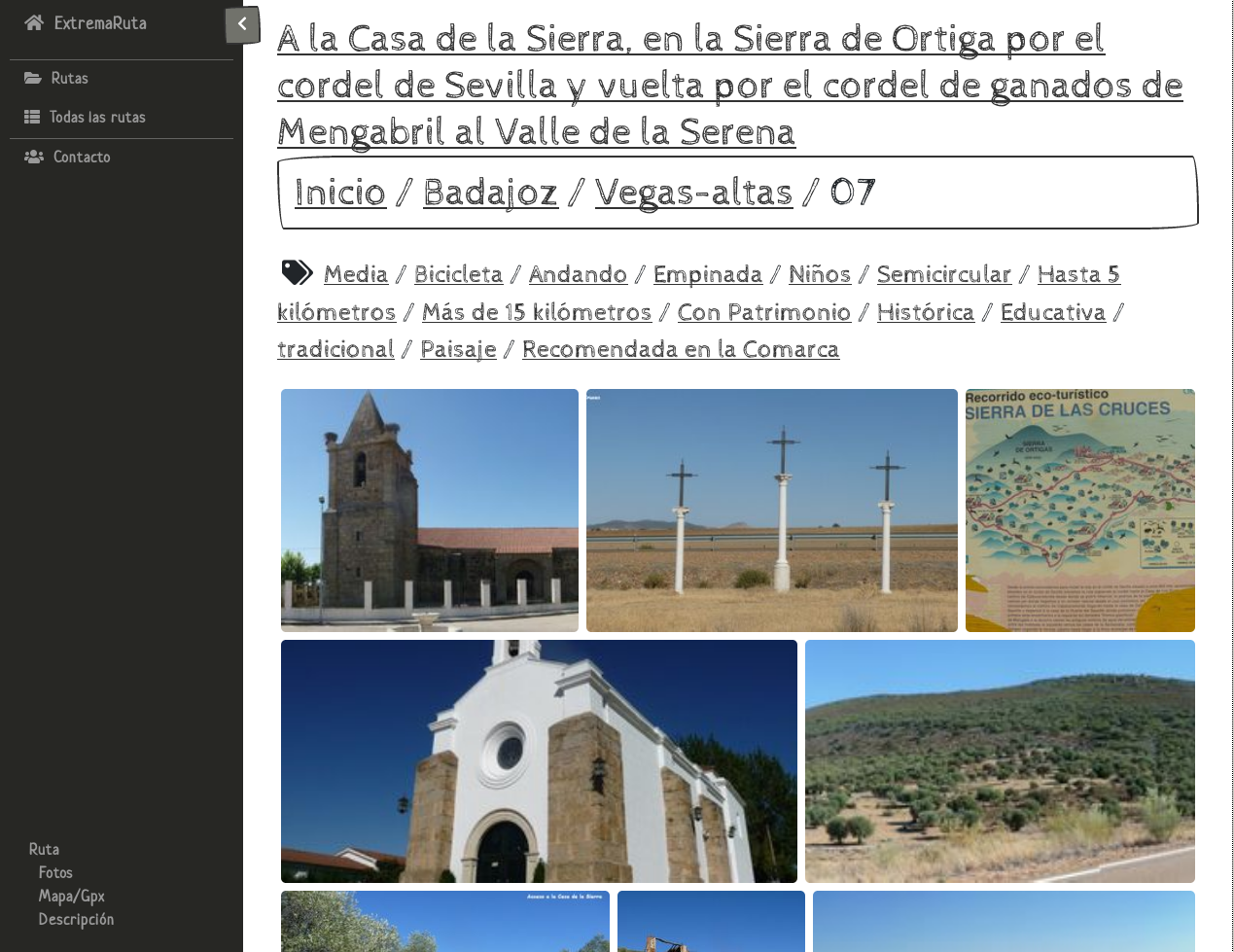

I was handed a PHP website that had been hacked a long time ago. It had to be taken down because there was no way to clean it up and no clean copy existed anywhere. The only reason they were using PHP was that it was "easy" up front, but they never really thought it through, and they didn't actually need anything dynamic, such as user accounts.

One of the perks of static websites is that they are virtually impossible to hack. Even if one does get compromised (most likely because something else on the server was hacked and it got caught up in it), you can have it up again somewhere else in a matter of minutes.

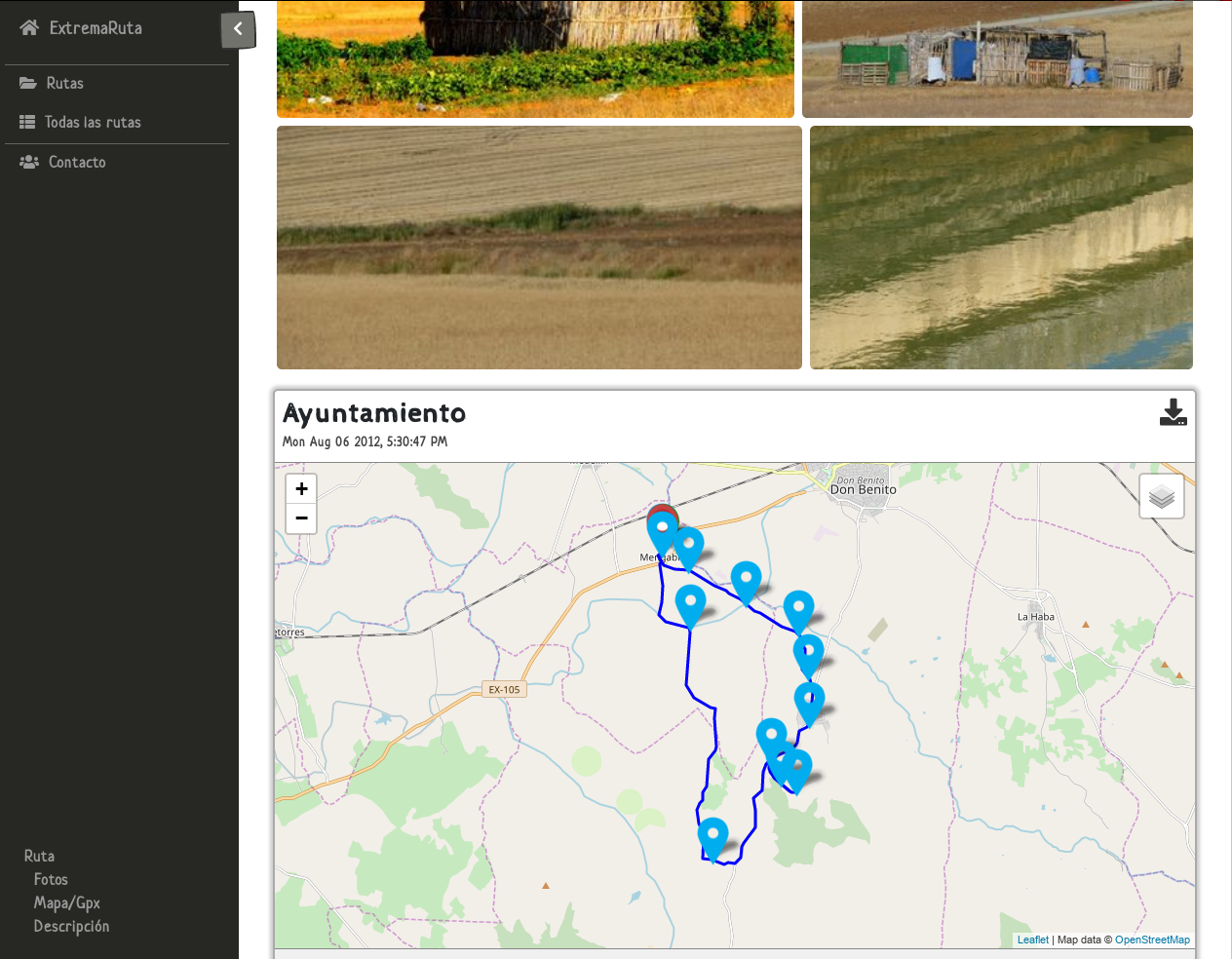

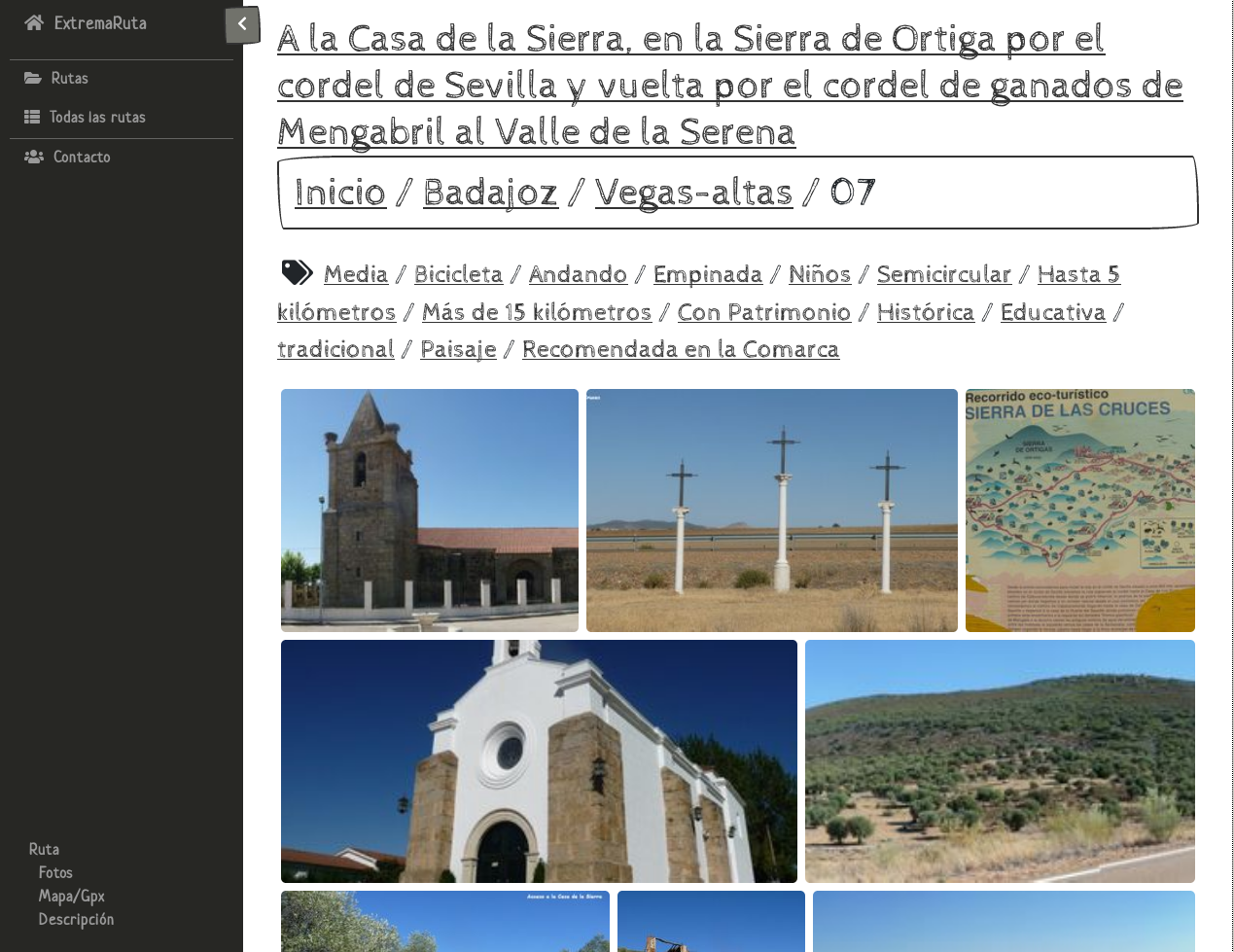

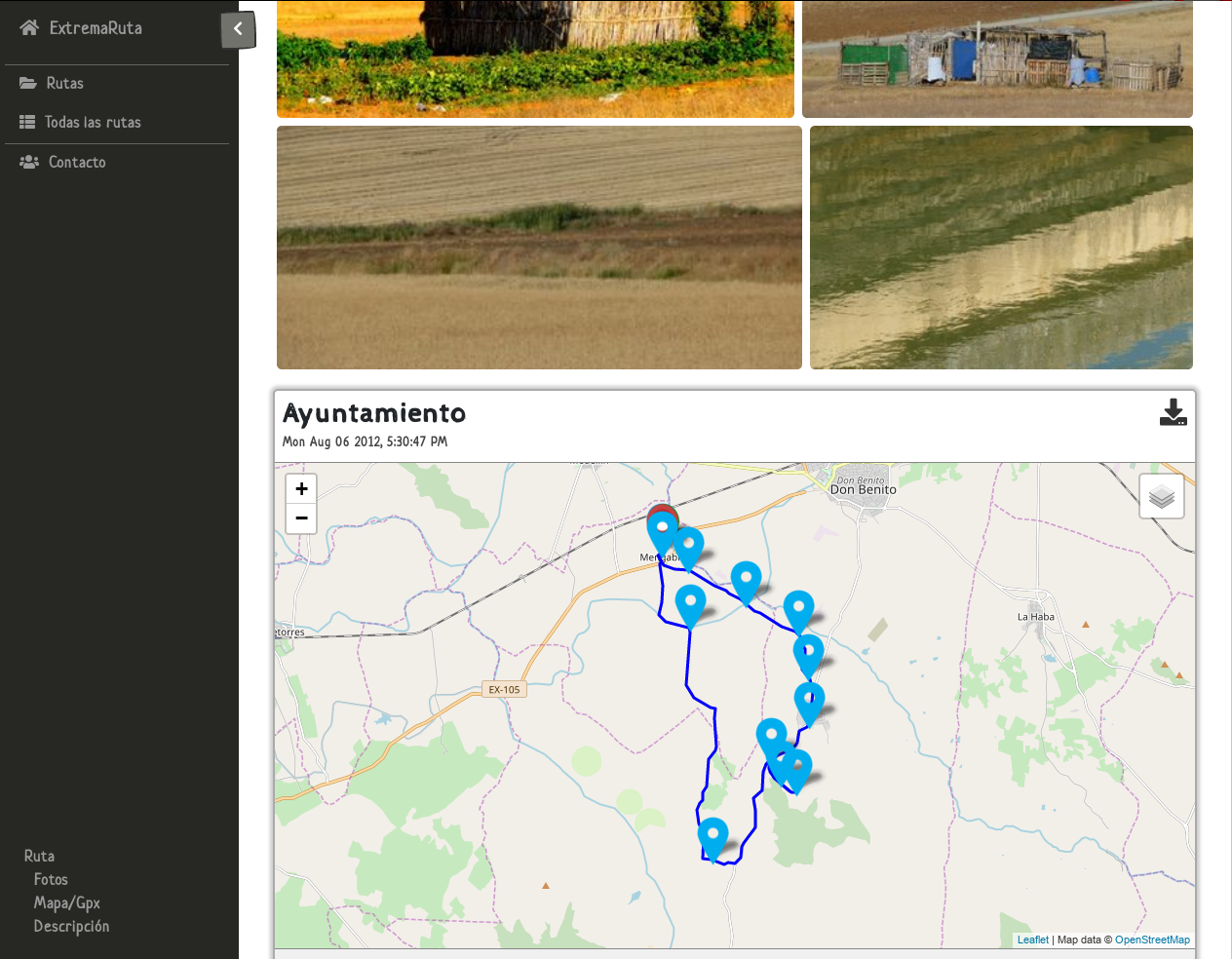

So off we go to turn the original data into a website. I chose my preferred static site generator, Pelican, and then wrote a few Python scripts that mostly spit out Markdown (so they aren't even a Pelican-specific generator!).
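To give an idea of what "spitting out Markdown" means here, this is a simplified sketch that renders one route's page to a string. The route dict, paths and names are made up, and the lowercase-and-dash file name is only a rough stand-in for the script's `sanitize_name`; the field names (`long`, `tags`, `pdf_orig`, `gpx_orig`, `pics`) are the ones routes.py below uses. Pelican reads the `Key: value` lines at the top of a Markdown file as metadata and treats everything after the first blank line as the page body, so the sketch keeps all metadata together. The literal `{photo}` prefix is written as in the script, presumably for a Pelican gallery plugin to expand (the post doesn't name the plugin).

```python
import os.path


def render_page(prov, comar, ruta, r, static_base):
    """Render a route's Pelican page as text (hypothetical helper)."""
    meta = [
        "Title: " + r.get("long", f"{prov} - {comar} - Ruta {ruta}"),
        f"Path: {ruta}",
        "Date: 2018-01-01 00:00",
    ]
    notes = []
    if "tags" in r:
        meta.append("Tags: " + ", ".join(r["tags"]))
    # "{photo}" is kept as literal text, not a format field
    meta.append("Gallery: {photo}" + os.path.join(prov, comar, ruta))
    for key, label, note in (
        ("pdf_orig", "Pdf", "Esta ruta no tiene descripcion (pdf)"),
        ("gpx_orig", "Gpx", "Esta ruta no tiene coordenadas (gpx)"),
    ):
        if key in r:
            # rough stand-in for sanitize_name(): lowercase, spaces -> dashes
            name = os.path.basename(r[key]).lower().replace(" ", "-")
            meta.append(f"{label}: " + os.path.join("/", static_base, name))
        else:
            notes.append(note)
    if "pics" not in r:
        notes.append("Esta ruta no tiene fotos")
    # the blank line ends the metadata; the notes become the page body
    return "\n".join(meta) + "\n\n" + "".join(n + "\n\n" for n in notes)


page = render_page("ejemplo", "comarca", "03",
                   {"long": "Laguna", "tags": ["agua", "pinar"],
                    "pdf_orig": "x/R3 Laguna.pdf"},
                   "auto/static/ejemplo/comarca/03")
print(page)
```

Rendering to a string rather than writing straight to a file makes the output easy to eyeball while debugging, which matters when each full run touches the whole data set.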

The scripts scan a directory of photos, .gpx and .pdf files, generate the Markdown, and work out from the file names where each file belongs and what is part of the website.
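To make the file-name convention concrete, here is a minimal sketch that reuses the regular expressions from routes.py below to classify a single path. The `classify` function and the sample paths are made up for illustration; only the patterns come from the script, which applies the same first-match-wins order and the same zero padding of route numbers.

```python
import re

# Patterns copied from routes.py; checked in order, first match wins
PATTERNS = (
    ("banner", re.compile(r".*BANNER/Etiquetadas/.*(?:jpg|JPG)$")),
    ("pdf", re.compile(r".*/R(\d{1,2}).*(?:PDF|pdf)$")),
    ("gpx", re.compile(r".*/R(\d{1,2}).*(?:GPX|gpx)$")),
    ("photo", re.compile(r".*/\d{1,2}R(\d{1,2}).*(?:jpg|JPG)$")),
)
# ".../PROVINCIA DE <province>/<number> <comarca> CC|BA/..."
PATH_RE = re.compile(r".*PROVINCIA DE (.*)/\d* (.*)\ (?:CC|BA)/.*")


def classify(path):
    """Return (kind, province, comarca, route) for path, or None."""
    for kind, regex in PATTERNS:
        match = regex.match(path)
        if not match:
            continue
        if kind == "banner":
            return ("banner", None, None, None)
        place = PATH_RE.match(path)
        if not place:
            return None
        # zero-pad so "R3" and "R03" end up in the same route
        route = match.group(1).zfill(2)
        return (kind, place.group(1), place.group(2), route)
    return None


print(classify("web/PROVINCIA DE EJEMPLO/05 COMARCA CC/R3 Laguna.pdf"))
# ('pdf', 'EJEMPLO', 'COMARCA', '03')
```

Because everything is derived from the names, the directory layout is the only "database" the site needs.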

The major challenge was keeping run times down: there is almost 10 GB of data to process, and debugging would have been very tedious otherwise. Thumbnails have to be generated, watermarks added, new additions to the original data detected, etc. Anything that's done has to go through those 10 GB.
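The scripts below only skip the full scan when a cache file already exists, so forcing a rescan means deleting it. One way to actually detect what's new, sketched here as an alternative and not something the post's scripts do, is to keep a manifest of each file's size and modification time and compare it between runs. That costs one `stat()` per file instead of reading the data. The function names and manifest location are hypothetical.

```python
import json
import os


def scan(root):
    """Map every file under root to [size, mtime_ns] without reading it."""
    manifest = {}
    for dirpath, _dirs, files in os.walk(root):
        for name in files:
            path = os.path.join(dirpath, name)
            st = os.stat(path)
            # lists, not tuples, so values compare equal after a JSON round trip
            manifest[path] = [st.st_size, st.st_mtime_ns]
    return manifest


def changed_files(root, manifest_path):
    """Return (new_or_modified, removed) since the last saved manifest,
    then save the current manifest for the next run."""
    try:
        with open(manifest_path) as f:
            old = json.load(f)
    except FileNotFoundError:
        old = {}
    current = scan(root)
    modified = sorted(p for p, sig in current.items() if old.get(p) != sig)
    removed = sorted(p for p in old if p not in current)
    with open(manifest_path, "w") as f:
        json.dump(current, f)
    return modified, removed
```

Keep the manifest outside the scanned directory, otherwise it would show up as a changed file itself.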

```python
#!/usr/bin/python3
"""
process.py

Moves files around and triggers the different processes
in the order they need to run, both for testing and for production.
"""
import sys
from os.path import join, exists, relpath
from subprocess import run

import routes


def sync_files():
    """Mirror the generated content into FINAL_OUTPUT with rsync."""
    orig = join(routes.OUTPUT, "")
    dest = join(routes.FINAL_OUTPUT, "")
    # rsync resolves a relative --link-dest against the destination
    # directory, so express orig relative to dest
    linkdest = relpath(orig, dest)
    command = ("rsync", "-ah", "--delete",
               "--link-dest={}".format(linkdest), orig, dest)

    reallinkdest = join(dest, linkdest)
    if exists(reallinkdest):
        run(command, check=True)
    else:
        print("{} doesn't exist".format(reallinkdest))
        print("it's very likely the command is wrong:\n{}".format(command))
        sys.exit(1)


def test_run():
    f = '.files_cache_files'
    if exists(f):
        # disabled: shutil.move(f, 'todelete')
        pass
    r = routes.Routes("real.files")
    # print(r)
    r.move_files()
    r.generate_markdown()
    sync_files()


def final_run():
    r = routes.Routes("/media/usb/web/")
    # print(r)
    r.move_files()
    r.generate_markdown()
    sync_files()


if __name__ == "__main__":
    test_run()
    # final_run()
```
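rsync resolves a relative `--link-dest` against the destination directory, not the current one, which makes it easy to end up with one `..` too few; the `exists()` check in `sync_files` is there to catch a wrong path before rsync runs. A small sketch, using only `os.path`, of computing the path and checking lexically where it lands (the helper names are hypothetical):

```python
import os.path


def link_dest_for(orig, dest):
    """Return orig expressed relative to dest, as rsync's --link-dest expects."""
    return os.path.relpath(orig, dest)


def resolves_to(orig, dest, link_dest):
    """Check, purely lexically, that dest + link_dest points back at orig."""
    target = os.path.normpath(os.path.join(dest, link_dest))
    return target == os.path.normpath(orig)


orig, dest = "content/auto/", "web/content/auto/"
print(link_dest_for(orig, dest))  # ../../../content/auto
# Two levels up from web/content/auto only reaches web/, so this
# lands back in web/content/auto, i.e. the destination itself
print(resolves_to(orig, dest, "../../content/auto"))  # False
```

The check is lexical only: if `web` were a symlink, the path rsync actually follows could differ.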

```python
#!/usr/bin/python3
"""
routes.py

Generates the route information and intermediate cache files so it
doesn't have to process everything every time.
"""
import json
import os
import re
import sys
from os.path import join, exists, basename, splitext
from pprint import pformat
from shutil import copy

try:
    from slugify import slugify
except ImportError as e:
    print(e)
    print("Missing module. Please install python3-slugify")
    sys.exit(1)

# original files path (only the last assignment is used)
# ORIG_BASE = "/media/usb0/web/"
# ORIG_BASE = "files"
ORIG_BASE = "real.files"
# relative dest to write content
OUTPUT = join("content", "auto", "")
# relative dest for pdf and gpx
STATIC = join("static", "")
FULL_STATIC = join("auto", "static", "")
# relative photos dest
PHOTOS = join("photos", "")
# relative markdown dest
PAGES = join("rutas", "")
# relative banner dest
BANNER = join(PHOTOS, "banner", "")
# full dests (relative to the working directory)
BASE_PAGES = join(OUTPUT, PAGES, "")
BASE_STATIC = join(OUTPUT, STATIC, "")
BASE_PHOTOS = join(OUTPUT, PHOTOS, "")
BASE_BANNER = join(OUTPUT, BANNER, "")
TAGS = 'tags.txt'
# Where to copy everything once it's generated
FINAL_OUTPUT = join("web", OUTPUT)


def hard_link(src, dst):
    """Try to hard link src to dst and copy it instead where that fails."""
    try:
        os.link(src, dst)
    except OSError:
        copy(src, dst)


def sanitize_name(fpath):
    """Return a sane file name: '/á/b/c áD.dS' -> 'c-ad.ds'"""
    name, ext = splitext(basename(fpath))
    # slugify() also drops the leading dot of the extension
    return ".".join((slugify(name), slugify(ext).lower()))


class Routes():
    pdf_re = re.compile(r".*/R(\d{1,2}).*(?:PDF|pdf)$")
    gpx_re = re.compile(r".*/R(\d{1,2}).*(?:GPX|gpx)$")
    jpg_re = re.compile(r".*/\d{1,2}R(\d{1,2}).*(?:jpg|JPG)$")
    banner_re = re.compile(r".*BANNER/Etiquetadas/.*(?:jpg|JPG)$")
    path_re = re.compile(r".*PROVINCIA DE (.*)/\d* (.*)\ (?:CC|BA)/.*")

    def __init__(self, path):
        # __routes__ is nested: {prov: {comarca: {ruta number: {...}}}}
        self.__routes__ = {}
        # __files__ holds files not tied to a ruta (the banner photos)
        self.__files__ = {}
        self.fcache = ".files_cache_" + slugify(path)
        if exists(self.fcache):
            print(f"Using cache to read. {self.fcache} detected")
            self._read_files_cache()
        else:
            print(f"No cache detected. Reading from {path}")
            self._read_files_to_cache(path)

    def __getitem__(self, item):
        return self.__routes__[item]

    def __iter__(self):
        return iter(self.__routes__)

    def __str__(self):
        return pformat(self.__routes__)

    def _init_dir(self, path, create_ruta_dirs=True):
        """Create the dir structure. Returns True if path had to be created."""
        created = True
        if exists(path):
            print(f"{path} exists. No need to create dirs")
            created = False
        else:
            print(f"{path} doesn't exist. Creating dirs")
            os.makedirs(path)
        if create_ruta_dirs:
            self._create_ruta_dirs(path)
        return created

    def _create_ruta_dirs(self, path):
        """Create the prov/comarca[/ruta] structure of directories in path."""
        for prov in self.__routes__:
            for comar in self.__routes__[prov]:
                comar_path = join(path, slugify(prov), slugify(comar))
                os.makedirs(comar_path, exist_ok=True)
                # Special case for BASE_PAGES: don't make the last ruta folder
                if path != BASE_PAGES:
                    for ruta in self.__routes__[prov][comar]:
                        os.makedirs(join(comar_path, ruta), exist_ok=True)

    def _read_files_cache(self):
        with open(self.fcache) as f:
            temp = json.load(f)
        self.__routes__ = temp['routes']
        self.__files__ = temp['files']

    def _store(self, root, fpath, match, key):
        """Record fpath as a banner photo or as a ruta pdf/gpx/photo."""
        if key == 'banner':
            self.__files__.setdefault('banner', []).append(fpath)
            return
        prov, comar = self._get_prov_comar(root)
        r = self._get_ruta(prov, comar, match.group(1).zfill(2))
        if key == 'pics':
            r.setdefault('pics', []).append(fpath)
        else:
            r[key] = fpath

    def _read_files_to_cache(self, path):
        """Read files (and tags) from path into memory and write the cache."""
        # first matching regex wins, so banner photos never count as rutas
        regexes = (
            (self.banner_re, 'banner'),
            (self.pdf_re, 'pdf_orig'),
            (self.gpx_re, 'gpx_orig'),
            (self.jpg_re, 'pics'),
        )
        for root, subdirs, files in os.walk(path):
            for f in files:
                fpath = join(root, f)
                for reg, key in regexes:
                    match = reg.match(fpath)
                    if not match:
                        continue
                    try:
                        self._store(root, fpath, match, key)
                    except Exception:
                        print(f"Not sure how to parse this file: {f}")
                        print(f"r: {root}\ns: {subdirs}\nf: {files}\n\n")
                    break
        self._read_tags()
        temp = {'routes': self.__routes__, 'files': self.__files__}
        with open(self.fcache, "w") as cache:
            json.dump(temp, cache)

    def _read_tags(self):
        """Read names and tags; each line of TAGS looks like
        <prov>/<comar>/<number>/<ignored>:<short>:<long>:<tag1>,<tag2>,..."""
        with open(TAGS) as f:
            for line in f:
                try:
                    ruta, short_name, long_name, tags = [
                        p.strip() for p in line.split(":")]
                    prov, comar, number, _ = ruta.split("/")
                except ValueError:
                    # wrong number of fields: skip the line
                    continue
                r = self._get_ruta(prov, comar, number)
                r.update({'short': short_name,
                          'long': long_name,
                          'tags': [t.strip() for t in tags.split(",")]})

    def _get_prov_comar(self, path):
        """Extract (province, comarca) from the directory path."""
        pathm = self.path_re.match(path)
        return pathm.group(1), pathm.group(2)

    def _get_ruta(self, prov, comar, ruta):
        """Return the ruta dict, creating the intermediate dicts if needed."""
        prov_d = self.__routes__.setdefault(slugify(prov), {})
        return prov_d.setdefault(slugify(comar), {}).setdefault(ruta, {})

    def move_files(self):
        """Move misc (banner) and ruta related files (not markdown)
        from the source dir to OUTPUT."""
        self._move_ruta_files()
        # misc have to be moved after ruta files, because the folder
        # inside photos prevents ruta photos from being moved
        self._move_misc_files()

    def _move_misc_files(self):
        if self._init_dir(BASE_BANNER, False):
            print("moving banner...")
            for f in self.__files__.get('banner', []):
                hard_link(f, join(BASE_BANNER, sanitize_name(f)))

    def _move_ruta_files(self):
        """Move everything ruta related: static and photos (not markdown)."""
        # files are only linked into directories created on this run
        create_static = self._init_dir(BASE_STATIC)
        if create_static:
            print("moving static...")
        create_photos = self._init_dir(BASE_PHOTOS)
        if create_photos:
            print("moving photos...")

        def move_file(orig, dest):
            hard_link(orig, join(dest, sanitize_name(orig)))

        for prov, comarcas in self.__routes__.items():
            for comar, rutas in comarcas.items():
                for ruta, r in rutas.items():
                    fbase_static = join(BASE_STATIC, prov, slugify(comar), ruta)
                    fbase_photos = join(BASE_PHOTOS, prov, slugify(comar), ruta)
                    if create_static:
                        for fkey in ("pdf_orig", "gpx_orig"):
                            if fkey in r:
                                move_file(r[fkey], fbase_static)
                    if create_photos:
                        for pic in r.get("pics", []):
                            move_file(pic, fbase_photos)

    def generate_markdown(self):
        """Create the markdown for every ruta in the correct directory."""
        self._init_dir(BASE_PAGES)
        for prov, comarcas in self.__routes__.items():
            for comar, rutas in comarcas.items():
                for ruta, r in rutas.items():
                    md_path = join(BASE_PAGES, prov, slugify(comar), f"{ruta}.md")
                    photos_base = join(prov, slugify(comar), ruta)
                    static_base = join(FULL_STATIC, prov, slugify(comar), ruta)
                    # Pelican metadata only runs until the first blank or
                    # non "Key: value" line, so notes go after the metadata
                    notes = []
                    with open(md_path, "w") as f:
                        title = r.get('long', f"{prov} - {comar} - Ruta {ruta}")
                        f.write(f"Title: {title}\n")
                        f.write(f"Path: {ruta}\n")
                        f.write("Date: 2018-01-01 00:00\n")
                        if 'tags' in r:
                            f.write("Tags: {}\n".format(", ".join(r['tags'])))
                        # "{photo}" is literal text, not an f-string field
                        f.write("Gallery: {photo}" + f"{photos_base}\n")
                        if 'pdf_orig' in r:
                            url = join("/", static_base, sanitize_name(r['pdf_orig']))
                            f.write(f"Pdf: {url}\n")
                        else:
                            # "This route has no description (pdf)"
                            notes.append('Esta ruta no tiene descripcion (pdf)')
                        if 'gpx_orig' in r:
                            url = join("/", static_base, sanitize_name(r['gpx_orig']))
                            f.write(f"Gpx: {url}\n")
                        else:
                            # "This route has no coordinates (gpx)"
                            notes.append('Esta ruta no tiene coordenadas (gpx)')
                        if 'pics' not in r:
                            # "This route has no photos"
                            notes.append('Esta ruta no tiene fotos')
                        # blank line ends the metadata; notes form the body
                        f.write("\n")
                        for note in notes:
                            f.write(note + "\n\n")


if __name__ == "__main__":
    routes = Routes(ORIG_BASE)
    # print(routes)
    print("done reading")
    routes.move_files()
    routes.generate_markdown()
    print("done writing")
```
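The `hard_link` helper is what keeps the 10 GB from being copied over and over: a hard link is just another directory entry for the same data on disk, so creating one is instant and takes no extra space. It only works within a single filesystem, hence the `copy` fallback. The flip side is that editing a linked file in place also changes the original. A quick sketch that checks this with `os.stat` on throwaway temporary files (file names are made up):

```python
import os
import shutil
import tempfile


def hard_link(src, dst):
    """Hard link src to dst; fall back to a copy where linking fails
    (e.g. across filesystems)."""
    try:
        os.link(src, dst)
    except OSError:
        shutil.copy(src, dst)


with tempfile.TemporaryDirectory() as tmp:
    src = os.path.join(tmp, "photo.jpg")
    with open(src, "wb") as f:
        f.write(b"not really a jpeg")
    dst = os.path.join(tmp, "linked.jpg")
    hard_link(src, dst)
    with open(dst, "rb") as f:
        content_ok = f.read() == b"not really a jpeg"
    # A hard link shares the inode, so the link count goes up to 2;
    # a fallback copy gets its own inode and leaves the count at 1
    same = os.stat(src).st_ino == os.stat(dst).st_ino
    link_count = os.stat(src).st_nlink
    print(f"same inode: {same}, links: {link_count}")
```

On a filesystem that supports hard links this should report the same inode and two links.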